Introduction

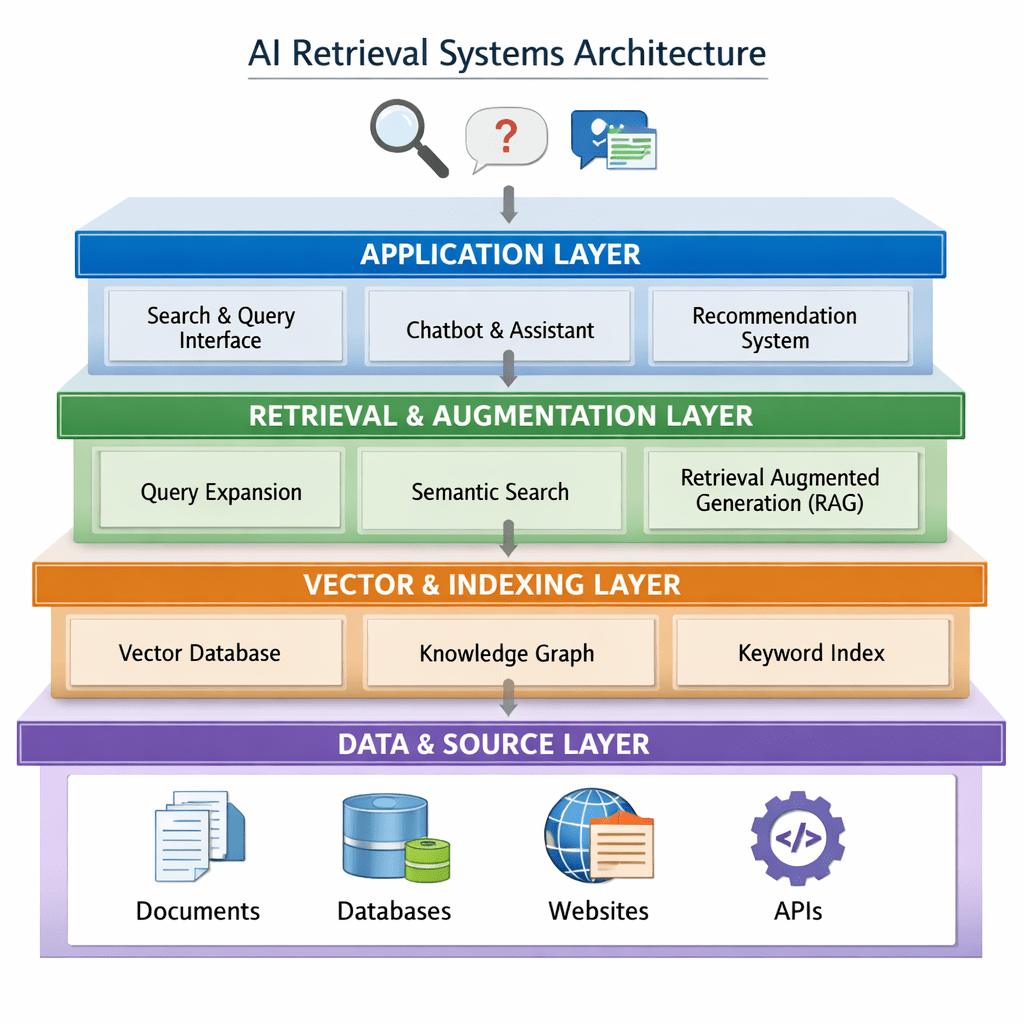

Over the past two years, the rise of large language models (LLMs) has fundamentally reshaped how we build applications. Retrieval is no longer just about keyword search—it’s about semantic understanding, hybrid querying, and real-time reasoning over embeddings.

This shift has introduced a new class of infrastructure: vector databases and AI-native search systems.

However, one of the most common mistakes teams make is assuming all these tools serve the same purpose. In reality, solutions like Pinecone, Qdrant, Weaviate, and Milvus are fundamentally different from hybrid search engines like Amazon OpenSearch Service or NLP-first systems like Amazon Kendra.

With the addition of Amazon OpenSearch Serverless, the landscape has become even more nuanced—offering serverless vector search but with different trade-offs in control, cost, and performance.

This blog breaks down these systems factually and practically, helping you choose the right backend for:

- Retrieval-Augmented Generation (RAG)

- Agentic AI systems

- Enterprise search platforms

- Large-scale embedding pipelines

A Practical Comparison of Modern Retrieval Systems for AI & RAG

| Parameter | Pinecone | Qdrant | AWS OpenSearch | AWS OpenSearch Serverless | Amazon Kendra | ChromaDB | Weaviate | Milvus |

|---|---|---|---|---|---|---|---|---|

| Category | Managed Vector DB | OSS Vector DB | Hybrid Search Engine (cluster-based) | Serverless Hybrid + Vector Engine | NLP Search System | Embedded Vector DB | Hybrid Vector DB | Distributed Vector DB |

| Query Speed | High (consistent latency) | High | Moderate–High (depends on tuning) | High, slight variability | High (NLP optimized) | Moderate | High | High–Very High |

| Indexing Methods | Proprietary ANN (HNSW-like) | HNSW | HNSW (Faiss/NMSLIB) | HNSW (vector engine) | Proprietary ML ranking | HNSW | HNSW + BM25 | HNSW, IVF, IVF_PQ |

| Indexing & Updates | Near real-time | Real-time streaming | Near real-time | Near real-time (serverless refresh) | Batch/connector-based | Fast (small scale) | Continuous ingestion | Batch-optimized |

| Search Accuracy | High | High | Good (hybrid strong) | Good–High | Very high (semantic ranking) | Good | High | Very high |

| Filtering Capability | Good | ⭐ Excellent | Excellent | Excellent | Good | Basic | Strong | Strong |

| Hybrid Search | Good | Good | ⭐ Best-in-class | ⭐ Strong | ⭐ NLP-driven | Limited | ⭐ Strong | Moderate |

| Scalability | Excellent | Good–Excellent | Excellent | ⭐ Excellent (auto-scale) | Fully managed | Limited | Good–Excellent | ⭐ Excellent |

| Multi-Tenancy | Strong | Strong | Strong | Strong | Enterprise-grade | Weak | Strong | Moderate |

| Vector Search | Core | Core | Plugin-based | Native | Limited | Core | Core | Core |

| NLP Capabilities | Minimal | Minimal | Limited | Limited | ⭐ Excellent | Minimal | Moderate | Minimal |

| Real-time Updates | Yes | Yes | Near real-time | Near real-time | No (sync-based) | Yes | Yes | Near real-time |

| Ease of Use | ⭐ Very Easy | Easy | Moderate | ⭐ Very Easy | Easy (user) | ⭐ Very Easy | Moderate | Moderate–Hard |

| Operational Complexity | Very Low | Low | High | ⭐ Very Low | Medium | Very Low | Medium | High |

| Cost Model | SaaS | OSS + cloud | AWS infra pricing | OCU-based | AWS pricing | Free / OSS | OSS + managed | OSS + managed |

| Best Fit | Production RAG | Real-time + filtering | Enterprise hybrid search | Serverless AI search | Enterprise knowledge search | Prototyping | Hybrid AI apps | Massive-scale AI |

Interpreting the Landscape

These tools belong to different architectural categories.

- Pure Vector Databases

→ Pinecone, Qdrant, Milvus

Built for embedding similarity and retrieval pipelines - Hybrid Search Engines

→ OpenSearch, OpenSearch Serverless, Weaviate

Combine keyword + vector + filters - NLP Search Systems

→ Amazon Kendra

Focused on document understanding, not embeddings - Developer-first Embedded DBs

→ ChromaDB

Ideal for local development and experimentation

Understanding this separation is more important than comparing features line-by-line.

Final Verdict: Which One Should You Choose?

| Use Case | Recommended Choice | Why |

|---|---|---|

| Production RAG (fastest time-to-market) | Pinecone | Fully managed, minimal infra, consistent performance |

| Agentic AI / real-time filtering systems | Qdrant | Best-in-class filtering + real-time ingestion |

| AWS-native serverless AI applications | OpenSearch Serverless | No infra, integrates with AWS ecosystem |

| Enterprise hybrid search (full control) | OpenSearch (Provisioned) | Maximum flexibility and tuning |

| Hybrid semantic + structured AI apps | Weaviate | Native hybrid search + schema support |

| Massive-scale vector search (100M–1B+) | Milvus | Distributed, high-performance architecture |

| Enterprise document search (non-RAG) | Amazon Kendra | Strong NLP ranking and connectors |

| Prototyping / local development | ChromaDB | Lightweight and developer-friendly |

Closing Thoughts

There is no universally “best” database in this space—only the one that aligns with your architecture, scale, and operational model.

What matters most is asking the right questions:

- Do you need pure vector retrieval or hybrid search?

- Does your Vector DB requirement align with a cloud-native solution?

- Is real-time ingestion critical?

- Are you optimizing for developer speed or infrastructure control?

- Will your system scale to millions or billions of embeddings?

A quick note:

This comparison is based on my hands-on experience, research, and understanding of the current ecosystem. It reflects my personal perspective as a practitioner and is not sponsored, affiliated, or influenced by any vendor or platform mentioned above.

Leave a comment