
Welcome Guys!

This blog is for beginners who want to set up a Big Data cluster on their local machine. There are different ways to install a Big Data Hadoop cluster. If you install it directly on a Windows or Linux platform, you have to be careful when setting up environment variables, configurations, and dependencies, and you may spend a lot of time troubleshooting. Instead, how about a predefined, ready-made environment that is just plug and play? In this blog, I explain how to set up the Big Data Hadoop Hortonworks Docker Sandbox on your PC.

Prerequisites/VirtualBox troubleshooting information:

- Make sure Virtualization is enabled in your BIOS

- Disable Hyper-V acceleration in Windows, using either of these methods:

  - Search for “Programs and Features” -> Turn Windows features on or off -> locate the Hyper-V option and uncheck it and its sub-items.

  - In an administrator command prompt, run “dism.exe /Online /Disable-Feature:Microsoft-Hyper-V-All”

- Make sure you have enough RAM

  - Your desktop/laptop should have at least 16 GB of RAM

  - Hortonworks requires a minimum of 8 GB of physical RAM on your PC

- Follow the recommended steps given below
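To check the RAM prerequisite without digging through system settings, here is a minimal Python sketch. The function names `total_ram_bytes` and `ram_verdict` are my own, and rounding to the nearest GiB is an assumption I made because the operating system usually reports slightly less than the installed amount. The 8 GB and 16 GB thresholds are the ones listed above.

```python
import os
import sys


def total_ram_bytes():
    """Return the physical RAM in bytes, or None if it cannot be determined."""
    if sys.platform == "win32":
        import ctypes

        # Mirrors the Win32 MEMORYSTATUSEX structure.
        class MemoryStatusEx(ctypes.Structure):
            _fields_ = [
                ("dwLength", ctypes.c_ulong),
                ("dwMemoryLoad", ctypes.c_ulong),
                ("ullTotalPhys", ctypes.c_ulonglong),
                ("ullAvailPhys", ctypes.c_ulonglong),
                ("ullTotalPageFile", ctypes.c_ulonglong),
                ("ullAvailPageFile", ctypes.c_ulonglong),
                ("ullTotalVirtual", ctypes.c_ulonglong),
                ("ullAvailVirtual", ctypes.c_ulonglong),
                ("ullAvailExtendedVirtual", ctypes.c_ulonglong),
            ]

        status = MemoryStatusEx()
        status.dwLength = ctypes.sizeof(MemoryStatusEx)
        if not ctypes.windll.kernel32.GlobalMemoryStatusEx(ctypes.byref(status)):
            return None
        return status.ullTotalPhys
    try:
        # Works on Linux and most Unix-like systems.
        return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES")
    except (AttributeError, ValueError, OSError):
        return None


def ram_verdict(total_bytes, required_gb=8, recommended_gb=16):
    """Classify RAM against the sandbox minimum and the recommended host size."""
    # Assumption: round to the nearest GiB, since reported RAM is a bit under nominal.
    gib = round(total_bytes / 1024**3)
    if gib < required_gb:
        return "insufficient"
    if gib < recommended_gb:
        return "below recommended"
    return "ok"
```

Calling `ram_verdict(total_ram_bytes())` on your own machine (after checking that the first call did not return `None`) tells you whether it is worth starting the download at all.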

Getting Started

How to Host Big Data Hadoop Hortonworks Sandbox:

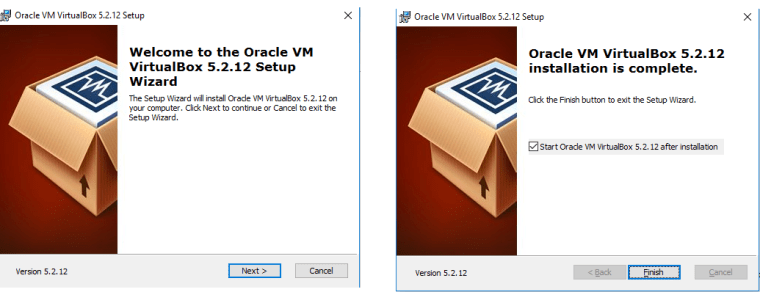

The first step in setting up the Big Data Hadoop Hortonworks Sandbox is installing VirtualBox.

- Navigate to https://www.virtualbox.org/

- Click the button to download the latest version

- You will see the VirtualBox platform packages, as shown below

- Click “Windows hosts” to download the installer

- Open the downloaded VirtualBox installer and click Next through the steps to finish the installation

- Now download the HDP (Hortonworks Data Platform) Sandbox from https://hortonworks.com/products/sandbox/


- Click the “Download HDP Sandbox” button, which takes you to the page where the OVA image can be downloaded.

- There are images for different virtualization tools, e.g., VirtualBox or VMware (for which you have to install VMware Workstation Player). Either is fine; I used VirtualBox.

- The image is large, around 9-10 GB or more, so the download takes a while depending on your internet speed

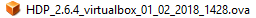

- After the download finishes, open the OVA file; VirtualBox launches and prompts you to import it

- Note that the import requires a minimum of 8 GB of RAM on your machine. Click Import; it takes some time to import everything.
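If you prefer the command line to the import dialog, VirtualBox also ships the `VBoxManage` tool, which can import an OVA. The sketch below is one way to wrap it from Python. It assumes `VBoxManage` is on your PATH; the file name `sandbox.ova` in the usage note is a placeholder for whatever you downloaded, and the 8192 MB value simply mirrors the 8 GB minimum mentioned above.

```python
import shutil
import subprocess


def build_import_command(ova_path, memory_mb=8192, vboxmanage="VBoxManage"):
    """Build the VBoxManage call that imports an OVA with a given RAM size."""
    # --vsys 0 selects the first (and normally only) virtual system in the OVA.
    return [vboxmanage, "import", ova_path, "--vsys", "0", "--memory", str(memory_mb)]


def import_sandbox(ova_path, memory_mb=8192):
    """Run the import; raises if VBoxManage is missing or the import fails."""
    if shutil.which("VBoxManage") is None:
        raise FileNotFoundError("VBoxManage not found on PATH")
    subprocess.run(build_import_command(ova_path, memory_mb), check=True)
```

For example, `import_sandbox("sandbox.ova")` should do roughly what clicking Import does in the dialog, and it takes just as long.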

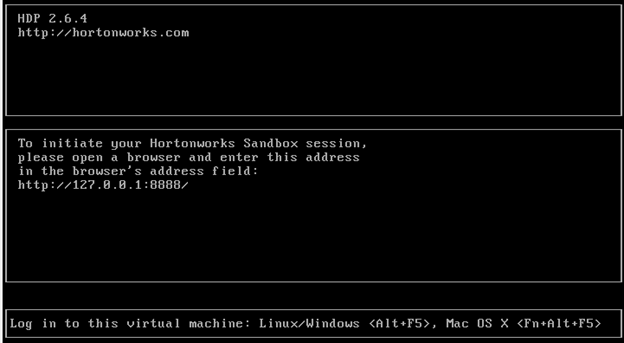

- After the import is done, the following image shows that the import succeeded and the Big Data Sandbox is ready to use.

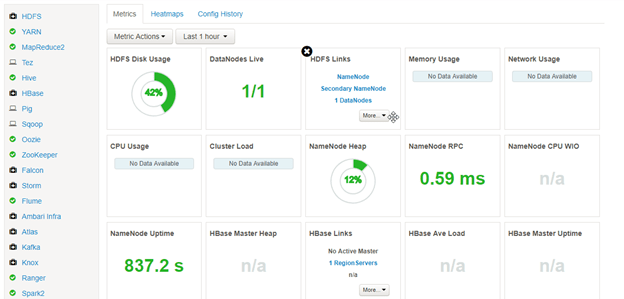

- To verify that the Big Data Hortonworks Sandbox is set up successfully, launch a browser and enter http://127.0.0.1:8888, where Ambari is running

- It navigates to the main page; click Dashboard

- The Ambari login page appears, where you need to enter the credentials maria_dev/maria_dev

- After a successful login, you can see that all services are running, as shown below.
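The sandbox takes a while to boot, so the page may not load on the first try. As a rough sketch, the browser check above can be scripted with the Python standard library. The function name `sandbox_is_up` is my own, and treating any HTTP answer as "up" is an assumption; it does not check that every Hadoop service has started.

```python
import http.client
import urllib.error
import urllib.request

# Bypass any system proxy: the sandbox is reached over the loopback address.
_OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def sandbox_is_up(url="http://127.0.0.1:8888", timeout=5.0):
    """Return True if something answers HTTP at the given URL."""
    try:
        with _OPENER.open(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server replied, just with an error status code.
        return True
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        # Connection refused, timed out, or the reply was not valid HTTP.
        return False
```

If `sandbox_is_up()` returns `False`, give the virtual machine a few more minutes before trying the browser again.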

How to Connect to the Big Data Hadoop Sandbox Machine:

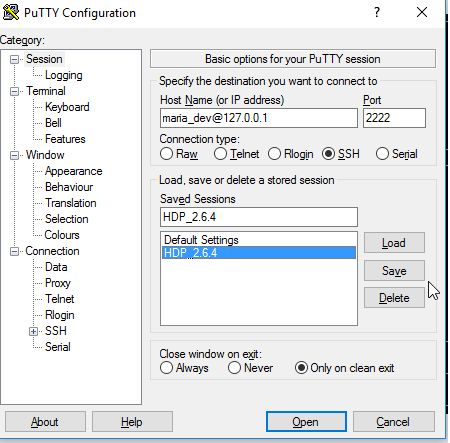

- Open PuTTY (install it if you don’t have it on your machine)

- Configure it as shown below; use port “2222” instead of 22 to connect to the HDP 2.6 Big Data Hadoop ecosystem

- Click Open

- At the prompt, enter the password “maria_dev”

- You are now connected to the sandbox machine, where you can perform file operations, copy required files, and so on.
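If PuTTY cannot connect, it helps to know whether port 2222 is reachable at all. Here is a small sketch that probes the port and builds the equivalent OpenSSH command for people who do not use PuTTY. The host `127.0.0.1` and the user name `maria_dev` are my assumptions (the text above only gives the port and the password), and both function names are made up for this example.

```python
import socket


def port_is_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def ssh_command(user="maria_dev", host="127.0.0.1", port=2222):
    """Build the OpenSSH equivalent of the PuTTY session (assumed user and host)."""
    return ["ssh", "-p", str(port), f"{user}@{host}"]
```

When `port_is_open("127.0.0.1", 2222)` returns `False`, the virtual machine is probably still booting or its port forwarding is not active.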

How to Shut Down the HDP Sandbox:

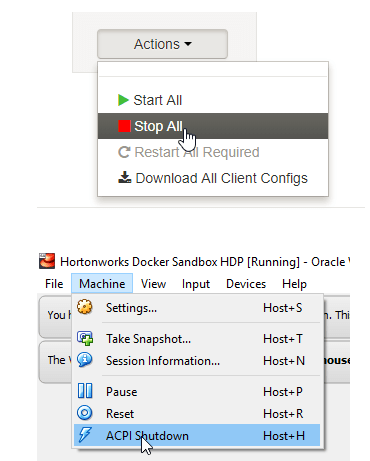

- In Ambari, under Actions at the bottom left, select Stop All. Stopping all services first is the safest way to shut down the sandbox. Then click ACPI Shutdown in VirtualBox.
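The ACPI shutdown can also be sent from a script with `VBoxManage controlvm <name> acpipowerbutton`. The sketch below lists the running machines first so you can pick the right name. It assumes `VBoxManage` is on your PATH and that you have already stopped all services in Ambari; the VM name in the usage note is a placeholder, because the real name depends on the image you imported.

```python
import re
import subprocess

# "VBoxManage list runningvms" prints one line per VM: "name" {uuid}
_VM_LINE = re.compile(r'^"(?P<name>.+)" \{[0-9a-fA-F-]+\}$')


def parse_running_vms(output):
    """Extract VM names from the output of 'VBoxManage list runningvms'."""
    names = []
    for line in output.splitlines():
        match = _VM_LINE.match(line.strip())
        if match:
            names.append(match.group("name"))
    return names


def running_vms():
    """Ask VirtualBox which machines are currently running."""
    result = subprocess.run(
        ["VBoxManage", "list", "runningvms"],
        capture_output=True, text=True, check=True,
    )
    return parse_running_vms(result.stdout)


def acpi_shutdown(vm_name):
    """Send the ACPI power button event, like the ACPI Shutdown menu item."""
    subprocess.run(["VBoxManage", "controlvm", vm_name, "acpipowerbutton"], check=True)
```

A typical use would be to call `running_vms()`, find the sandbox in the returned list, and pass that name to `acpi_shutdown("Example Sandbox VM")`.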

That’s it. Congratulations, you have made it :-).

Thanks for taking the time to read this blog. I hope it is helpful to you.

If you have any questions or need clarification, please contact automationcalling@gmail.com.

“http://127.0.0.1:8888” – Is this the standard path to access Ambari?


I fixed the typos. The standard path is http://127.0.0.1:8888/. You can see the same address in the loading window shown in the article, or you can replace it with localhost:8888 and try that.
